Let me be direct with you. I’ve seen developers, writers, and business owners all struggle with the same frustrating experience: they type something into ChatGPT or Claude, they get a mediocre response, and they walk away thinking “AI is overhyped.” But the AI isn’t the problem. The instruction is.

In Post 1 of this series, we covered what prompt engineering actually is. Today, we go one level deeper, and I’m going to give you a repeatable, structured framework that works reliably. No guesswork. No endless trial and error. Just consistent, high-quality output.

The quick test that reveals everything

Before we get into the framework, try this comparison. Two prompts, same AI, same topic:

The difference in output quality is not small — it is enormous. And the only variable that changed was the structure of the instruction. That structure has a name.

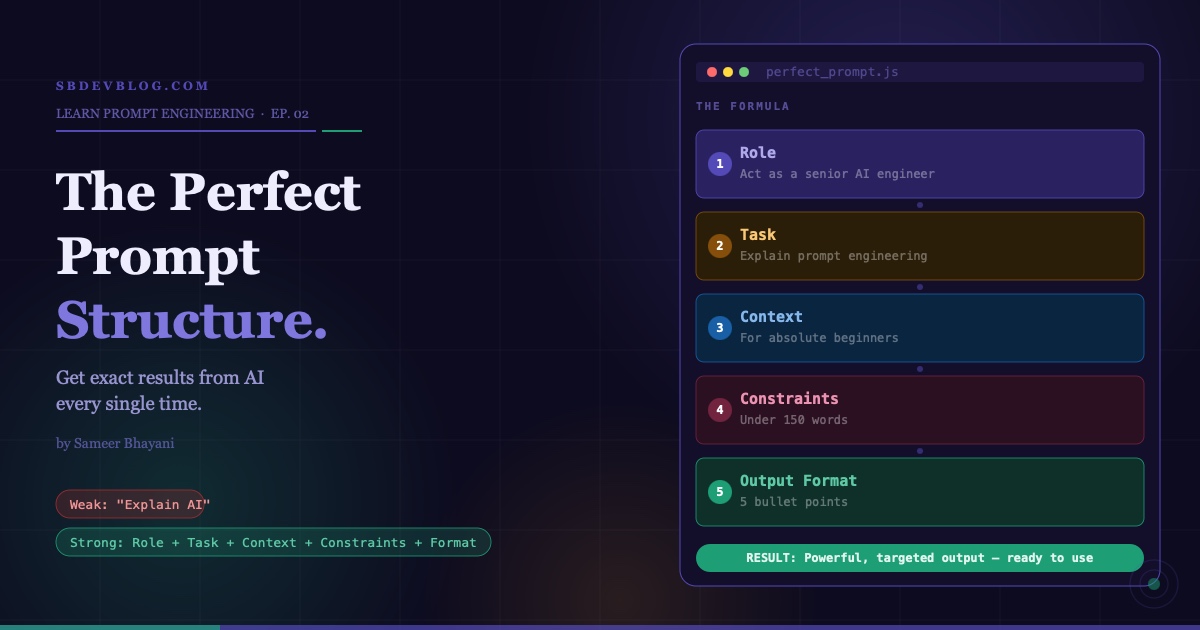

The framework: Role + Task + Context + Constraints + Output Format

This five-part structure is the backbone of professional prompt engineering. Memorise it, and you will never write a weak prompt again.

Breaking down each component

1. Role: tell the AI who it should be. When you assign a role, the AI adapts its tone, depth, vocabulary, and style accordingly. A senior engineer explains things differently from a children’s teacher, and the model has picked up those differences from its training data. Instead of “Explain AI” → “Act as a senior AI engineer”.

2. Task: be crystal clear about what you want. The task is the actual deliverable, and it should be a single, unambiguous instruction. Vague tasks produce vague outputs; the clearer your task, the more precisely the AI can execute it. Not “Write something” → “Explain prompt engineering in simple terms”.

3. Context: this is where most people fail. The AI doesn’t know your situation unless you tell it. Who is this for? What level are they at? What’s the goal? Without context, the AI fills in its own assumptions, and they often don’t match yours. Example: “…for beginners who have never used AI before”.

4. Constraints: set the limits upfront. Constraints keep the AI from going off track. Word count, tone, reading level, and what to avoid act as guardrails that keep the output focused and usable without heavy edits. Example: “…in under 150 words using simple language”.

5. Output Format: control how it’s presented. Telling the AI how to structure the answer gives you something you can use immediately, rather than a block of unformatted prose you have to reorganise by hand. Example: “Give the answer in 5 bullet points”.
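The five parts map naturally onto a small data structure. Here is a minimal Python sketch, assuming you want to keep each component as its own field and join them into one prompt string. `PromptSpec` and `build()` are hypothetical names, not part of any library, and joining the parts with single spaces in framework order is just one reasonable choice.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container: one field per framework component."""
    role: str
    task: str
    context: str
    constraints: str
    output_format: str

    def build(self) -> str:
        # Keep framework order and skip any component left blank.
        parts = [self.role, self.task, self.context,
                 self.constraints, self.output_format]
        return " ".join(p.strip() for p in parts if p.strip())


# The example for each component, taken from the list above.
spec = PromptSpec(
    role="Act as a senior AI engineer.",
    task="Explain prompt engineering in simple terms",
    context="for beginners who have never used AI before,",
    constraints="in under 150 words using simple language.",
    output_format="Give the answer in 5 bullet points.",
)
print(spec.build())
```

Keeping the components separate makes it easy to reuse the same role and format while swapping a different audience into `context`.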

Putting it all together: the full transformation

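Here’s what combining all five parts looks like in practice, using the example from each component above:

“Act as a senior AI engineer. Explain prompt engineering in simple terms for beginners who have never used AI before, in under 150 words using simple language. Give the answer in 5 bullet points.”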

Same AI. Same model. Completely different output quality. The only thing that changed is how the instruction was written. That is the power of structure.

Why this works: how AI actually processes your input

Large Language Models don’t think the way humans do. They read your input, match it against patterns learned from their training data, and generate a response one piece at a time, favouring continuations that are statistically likely given everything in the prompt. They can’t reliably infer intent you haven’t stated or read between the lines. They respond to what is written.

This means that when your prompt is structured and specific, your result becomes far more predictable. When your prompt is vague, the AI fills the gaps with whatever pattern is most likely, which often doesn’t match what you actually wanted.
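As a rough intuition for “most statistically likely”, the toy below counts which word follows which in a tiny invented corpus (a bigram model) and then always picks the most frequent next word (greedy decoding). Real LLMs are vastly larger neural networks and often sample rather than always taking the top choice, so treat this only as an illustration that the output is driven by the input plus learned patterns. The corpus and function names are made up for the example.

```python
from collections import Counter, defaultdict

# Invented toy corpus; a real model learns from vastly more text.
CORPUS = (
    "explain ai to beginners simply . "
    "explain ai to beginners simply . "
    "explain ai to engineers precisely ."
).split()


def train_bigrams(tokens):
    """Count how often each word follows each other word."""
    follows = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        follows[prev][nxt] += 1
    return follows


def greedy_continue(follows, prompt, max_words=10):
    """Extend the prompt by repeatedly taking the most frequent next word."""
    words = prompt.split()
    for _ in range(max_words):
        options = follows.get(words[-1])
        if not options:
            break  # nothing in the corpus ever followed this word
        next_word = options.most_common(1)[0][0]
        words.append(next_word)
        if next_word == ".":
            break  # stop at the end of a sentence
    return " ".join(words)


model = train_bigrams(CORPUS)
print(greedy_continue(model, "explain ai to"))
print(greedy_continue(model, "explain ai to engineers"))
```

With the vague prompt, the toy falls back on its most frequent pattern (“beginners simply”); adding one word of context steers it to a different continuation (“engineers precisely”).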

The pro-level tip most people don’t know

Add this single line to the end of any complex prompt: “If needed, ask clarifying questions before answering.” Something useful happens: the AI becomes interactive. Instead of generating a response based on its own assumptions, it can pause to check what you actually mean. It stops working for you and starts working with you. This one line turns a one-directional tool into a collaborative thought partner.
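If you assemble prompts in code, the tip is easy to apply consistently. This hypothetical helper appends the line “If needed, ask clarifying questions before answering.” exactly once, skipping prompts that already contain it. It works on plain strings and is not tied to any particular AI provider.

```python
CLARIFY_LINE = "If needed, ask clarifying questions before answering."


def with_clarifying_questions(prompt: str) -> str:
    """Append the clarifying-questions line unless it is already present."""
    if CLARIFY_LINE.lower() in prompt.lower():
        return prompt
    return prompt.rstrip() + "\n\n" + CLARIFY_LINE


prompt = with_clarifying_questions(
    "Act as a senior AI engineer. Explain prompt engineering in simple terms."
)
print(prompt)
```

Calling the helper twice leaves the prompt unchanged, so it is safe to apply at the end of any prompt-building step.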

The most common mistake — and why it keeps people stuck

I see this constantly. Someone writes a technically correct prompt with a role, task, and output format — but leaves out the context. The AI responds competently to the general question, but it completely misses the actual use case. Context is the difference between a generic answer and a targeted one. Never skip it.
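A quick pre-send check can catch that omission. The sketch below assumes you keep the five parts in a dictionary with the hypothetical keys shown; `missing_components` simply reports which parts are blank. It can only tell you a part is missing, not whether the context you wrote is any good.

```python
COMPONENTS = ("role", "task", "context", "constraints", "output_format")


def missing_components(prompt_parts: dict) -> list:
    """Return the framework components that are absent or blank, in order."""
    return [name for name in COMPONENTS
            if not prompt_parts.get(name, "").strip()]


# A draft with role, task, constraints and format, but no context.
draft = {
    "role": "Act as a senior AI engineer.",
    "task": "Explain prompt engineering in simple terms.",
    "constraints": "Keep it under 150 words.",
    "output_format": "Give the answer in 5 bullet points.",
}
print(missing_components(draft))
```

For this draft the check reports only `context`, which is exactly the gap described above.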

The output quality ladder

Your challenge for this Post

What’s coming in Post 3

Next up, we’re going deeper into one of the most powerful and underused techniques in prompt engineering: zero-shot vs few-shot prompting. You’ll learn how to give AI examples from your own work so it can replicate your exact style and produce outputs with dramatically higher accuracy.

Frequently asked questions

What is the perfect prompt structure?

The perfect prompt structure consists of five components: Role (who the AI should act as), Task (what you want it to produce), Context (background about the audience and use case), Constraints (word count, tone, and limits), and Output Format (how the response should be structured). Using all five consistently produces significantly better AI output.

Why does prompt structure matter so much?

AI language models can’t reliably infer intent you haven’t stated; they respond to what is literally written in the input. A vague prompt forces the AI to make assumptions, which often don’t match what the user needs. A structured prompt removes ambiguity and gives the model enough information to produce a targeted, useful response on the first attempt.

Why should I assign the AI a role?

Assigning a role tells the AI which persona to adopt, which directly influences tone, depth, vocabulary, and style. For example, “Act as a senior AI engineer” produces a more technical and precise response than a general instruction, because the model draws on patterns associated with that specific expertise.

What is the difference between constraints and output format?

Constraints define the limits of the response: word count, reading level, tone, or things to avoid. Output format tells the AI how to present the content: bullet points, numbered steps, a table, or a script. Constraints control scope; output format controls structure. Both are needed for a complete prompt.
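The split between scope and structure also shows up when you check a response. In this minimal sketch, assuming the constraint is a word limit and the format is a fixed number of bullet points starting with “- ” or “• ”, the two checks are independent: a reply can pass one and fail the other. The function names and the bullet-detection rule are assumptions for illustration.

```python
def meets_word_limit(text: str, max_words: int) -> bool:
    """Constraint check: count whitespace-separated tokens (bullet markers included)."""
    return len(text.split()) <= max_words


def count_bullets(text: str) -> int:
    """Format check: count lines that start with a '- ' or '• ' bullet marker."""
    return sum(1 for line in text.splitlines()
               if line.lstrip().startswith(("- ", "• ")))


# A stand-in reply with five short bullet points.
reply = "\n".join(f"- Point {i}" for i in range(1, 6))
print(meets_word_limit(reply, 150), count_bullets(reply) == 5)
```

A long paragraph would fail both checks, while five rambling bullets would pass the format check and could still fail the word limit.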

What is the most common prompt engineering mistake?

The most common mistake is skipping the context component. People assume the AI understands their specific situation, audience, and goal without being told. Without context, the AI produces a technically correct but practically useless response. Adding context transforms generic output into targeted, actionable content.

What does adding a clarifying-questions line do?

Adding “if needed, ask clarifying questions before answering” turns a one-directional prompt into an interactive exchange. Instead of proceeding on its own assumptions, the AI pauses to confirm key details. This is especially useful for complex tasks where the output needs to closely match specific requirements.

Does a structured prompt really change the output of the same AI model?

Yes, significantly. The underlying AI model is identical in both cases, but output quality depends heavily on the quality of the input. A structured five-part prompt consistently produces clearer, more relevant, and more immediately usable output than an unstructured prompt asking for the same thing.

What is prompt engineering, and why does it matter?

Prompt engineering is the skill of designing AI instructions that produce consistently high-quality output. It matters because AI adoption is accelerating across every profession, and the people who can direct AI tools effectively produce dramatically better results in less time. It requires no technical background and can be applied immediately in any field.

What is the difference between zero-shot and few-shot prompting?

Zero-shot prompting means asking an AI to complete a task without any examples, relying on its general training. Few-shot prompting means including one or more examples of the desired output within the prompt itself, so the model can pick up that specific style or format in context, without any retraining. Few-shot prompting typically produces more accurate results for specialised tasks.
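To make the distinction concrete, here is a minimal sketch that builds both kinds of prompt as plain text. The `Input:`/`Output:` labelling, the function names, and the brand-voice example pairs are all invented for illustration; there is no single required layout for few-shot examples.

```python
def zero_shot(task: str, new_input: str) -> str:
    """Task instruction plus the new input, with no examples."""
    return f"{task}\n\nInput: {new_input}\nOutput:"


def few_shot(task: str, examples: list, new_input: str) -> str:
    """Same as zero_shot, but with worked (input, output) pairs first."""
    if not examples:
        return zero_shot(task, new_input)
    shots = "\n\n".join(f"Input: {inp}\nOutput: {out}" for inp, out in examples)
    return f"{task}\n\n{shots}\n\nInput: {new_input}\nOutput:"


task = "Rewrite the product note in our brand voice."
# Hypothetical samples of "your own work" that the model should imitate.
examples = [
    ("New colours available.", "Fresh shades just landed. Go take a look!"),
    ("Shipping is delayed.", "Heads up: your parcel is taking the scenic route."),
]
print(few_shot(task, examples, "Prices are going up."))
```

Both prompts end with an open `Output:` slot; the only difference is whether the model sees worked examples of your style before it fills that slot.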